Many marketing teams face the same frustration. Content output increases, but organic traffic stays flat or starts to drop. New posts go live every week, yet older pages lose visibility. Engagement falls without clear technical problems. In most cases, keywords are not the issue. Publishing frequency is not the issue either. The real problem starts inside the content workflow. Faster production reduces review time. Pages begin to look and read the same. Readers notice the repetition early and stop engaging. Search systems reflect that behavior over time. AI content marketing fails in these situations because repetition replaces clear thinking, not because automation exists.

What Changed in Content Production?

Most teams did not plan to lower quality. They planned to move faster. Drafts began reaching the publishing stage with fewer reviews. The same prompts started getting reused across topics. Page structure repeated more often than before.

Over time, articles started opening in similar ways. Sections followed the same order. Summaries sounded familiar. Content written for different queries began to feel interchangeable.

This reduced variety. Readers felt it quickly.

Google Search Central Helpful Content System Documentation

https://developers.google.com/search/docs/fundamentals/creating-helpful-content

US20200349181A1 Document Quality Scoring

https://patents.google.com/patent/US20200349181A1

Why Faster Publishing Leads to Decline

Speed alone does not cause performance loss. The problem appears when multiple pages feel the same. Readers scroll less. Time on page drops. Return visits slow.

In many site reviews during 2023 and 2024, organic traffic declined within a few months of faster publishing. No penalties appeared. Indexing remained stable. The drop followed engagement changes, not technical errors.

AI-generated content struggles in search when pages stop offering something new.

AI content marketing exposes this weakness once editorial checks disappear. Without variation, differentiation fades.

Who This Impacts the Most?

Some teams feel this impact sooner than others. Early-stage brands often see faster declines because they lack trust history. Larger sites decline more slowly, but recovery takes longer.

Groups most affected:

• SaaS teams publishing weekly blogs

• D2C brands expanding category pages

• Product teams releasing update posts

• Agencies running batch publishing

Once review checks disappear, repetition spreads. Differentiation fades.

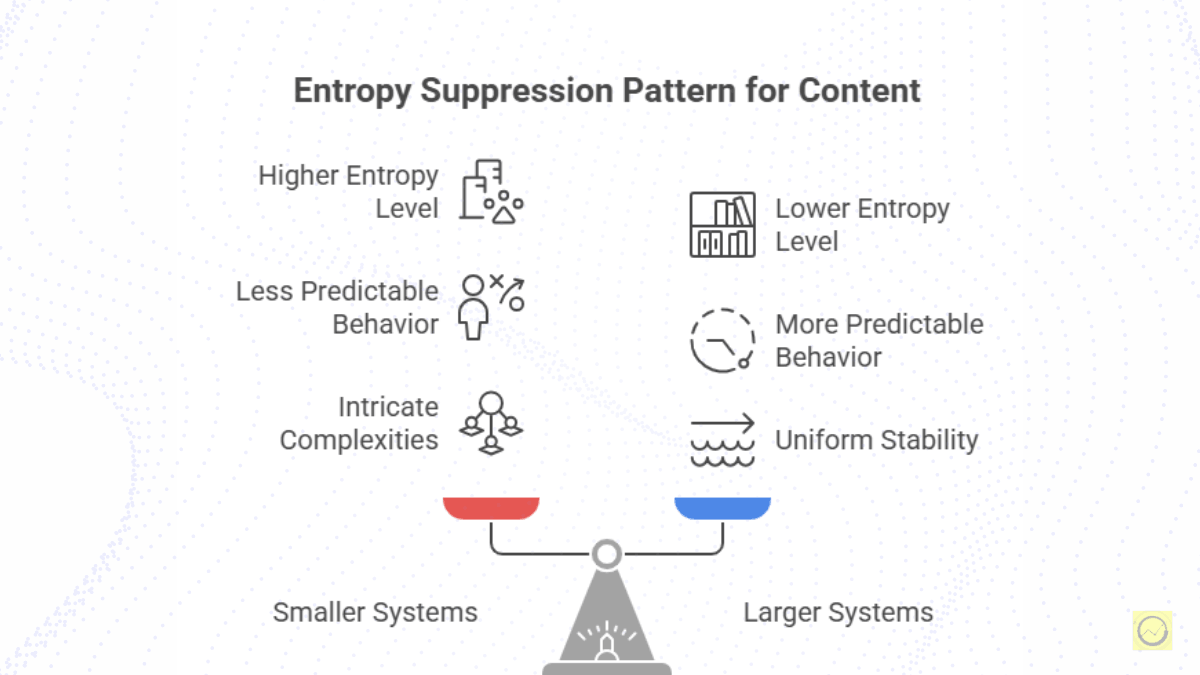

Why Entropy Disappears at Scale

Entropy drops when content follows predictable paths. Language repeats. Structure tightens. Pages begin to sound alike.
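One rough way to put a number on this is Shannon entropy over the words on a page. The sketch below is only an illustration: the article uses "entropy" loosely, and treating it as word-level Shannon entropy is an assumption, as are the function name `word_entropy` and the two sample strings. Scores also depend on text length, so compare pages of similar size.

```python
import math
import re
from collections import Counter


def word_entropy(text: str) -> float:
    """Shannon entropy (bits per word) of the word distribution in text."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return 0.0
    counts = Counter(words)
    total = len(words)
    # Sum of -p * log2(p) over each distinct word's share of the text.
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


# Hypothetical sample strings, made up for illustration.
repetitive = "our tool saves time our tool saves time our tool saves time"
varied = "editors cut three stale drafts and rewrote every opening by hand"

print(round(word_entropy(repetitive), 2))  # 2.0: four words, each used equally
print(round(word_entropy(varied), 2))      # 3.46: eleven distinct words
```

A lower score means fewer distinct words carry more of the text. It says nothing about usefulness, so it works best as a prompt for editorial review rather than a pass or fail gate.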

Readers sense this within seconds. Engagement falls. AI content quality drops when review becomes light. Detection tools often flag this sameness, but search systems respond to reader behavior, not detector scores.

AI content detection reflects repetition, not intent.

Common triggers:

• Reusing prompt templates

• Training on competitor content only

• Skipping editorial review

• Measuring output instead of reader action

Language Model Behavior and Probability Bias

https://openai.com/research

Team Reaction and Silent Change

Public conversations remain positive. Internal actions look different.

Many teams reduced output. Editors regained approval control. Agencies lowered batch sizes. Publishing slowed without announcements.

These changes helped. Rankings for AI-assisted content stabilized only after variation returned. This pattern appears across multiple regions and industries.

What Marketers Should Do Next

Fixing this issue does not require abandoning automation. It requires control.

Teams that recover usually do a few simple things. They change page structure across similar topics. They write unique openings. They review intent before grammar. They check live pages for repeated phrasing.

Humanize AI content through limits and variety, not polish. AI content optimization should follow engagement signals, not publishing speed.

Operational steps:

• Rotate content structures weekly

• Enforce unique first paragraphs across pages

• Assign editors to challenge intent, not grammar

• Track phrase repetition across published URLs
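The last two steps can be partly automated. The sketch below is a minimal example under stated assumptions: page text is already available as plain strings keyed by URL, paragraphs are separated by blank lines, and the helper names (`repeated_phrases`, `similar_openings`), the four-word phrase length, and the 0.8 similarity threshold are illustrative choices, not values from any tool or from the teams described here.

```python
import re
from collections import defaultdict
from difflib import SequenceMatcher
from itertools import combinations


def ngrams(text: str, n: int = 4) -> set[str]:
    """All n-word phrases in text, lowercased and stripped of punctuation."""
    words = re.findall(r"[a-z']+", text.lower())
    return {" ".join(words[i:i + n]) for i in range(len(words) - n + 1)}


def repeated_phrases(pages: dict[str, str], n: int = 4, min_pages: int = 2) -> dict[str, list[str]]:
    """Map each n-word phrase to the URLs using it, keeping phrases found on min_pages or more."""
    seen = defaultdict(set)
    for url, text in pages.items():
        for phrase in ngrams(text, n):
            seen[phrase].add(url)
    return {p: sorted(urls) for p, urls in seen.items() if len(urls) >= min_pages}


def similar_openings(pages: dict[str, str], threshold: float = 0.8) -> list[tuple[str, str, float]]:
    """Return URL pairs whose first paragraphs are near-duplicates."""
    openings = {url: text.strip().split("\n\n")[0].lower() for url, text in pages.items()}
    flagged = []
    for (a, text_a), (b, text_b) in combinations(sorted(openings.items()), 2):
        ratio = SequenceMatcher(None, text_a, text_b).ratio()
        if ratio >= threshold:
            flagged.append((a, b, round(ratio, 2)))
    return flagged


# Hypothetical pages, made up for illustration.
pages = {
    "/blog/crm-tips": "In today's fast-paced world, teams need better tools.\n\nA CRM helps sales teams track every deal.",
    "/blog/email-tips": "In today's fast-paced world, teams need better tools.\n\nEmail automation helps marketers reach more people.",
    "/blog/pricing": "We raised prices in March. Here is what happened next.\n\nChurn stayed flat for two quarters.",
}

for phrase, urls in sorted(repeated_phrases(pages).items()):
    print(phrase, urls)
print(similar_openings(pages))
```

Pairwise comparison grows quadratically with the number of pages, so on a large site it makes sense to run the opening check within a topic cluster rather than across every URL.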

What Works in Real Teams

In one SaaS case, output dropped by half. Each page followed a different structure. Editors blocked repeated openings and endings.

Within weeks, organic traffic recovered. Bounce rates fell. Rankings stabilized across key pages. AI writing tools supported drafts only. Editors controlled final publishing.

AI-assisted SEO content improved once automation stopped driving decisions.

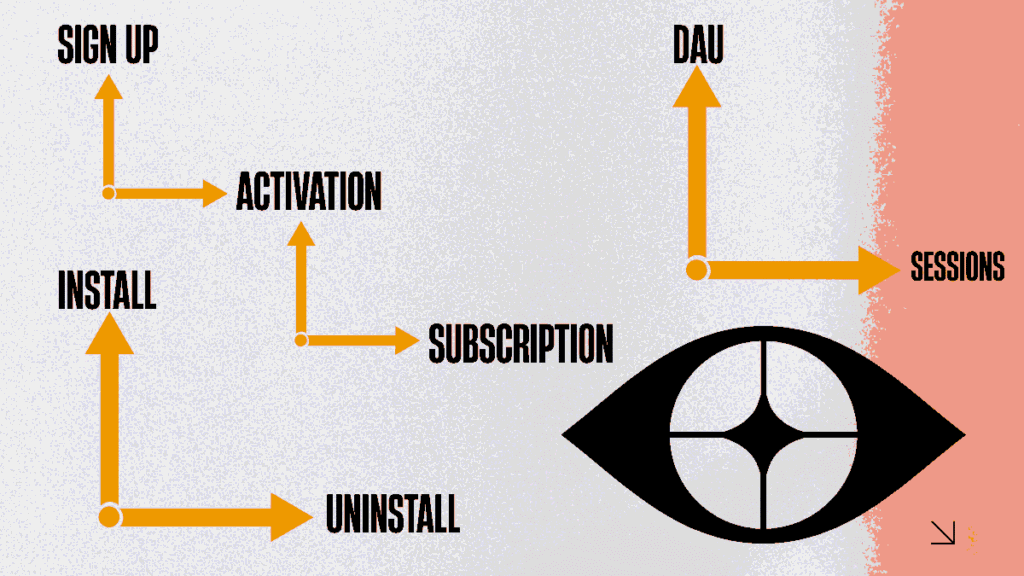

How Search Systems Respond

Search systems track how people interact with content. They look at scrolling, reading time, and return visits.

Entropy helps because it prevents repetition fatigue. AI-assisted SEO content performs better when pages explain similar topics in different ways. Pages that repeat logic decline faster. Pages that feel fresh hold attention longer.

Rankings follow these patterns over time.
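Because engagement tends to move before rankings do, a simple period-over-period check can act as an early warning. The sketch below assumes you can export average scroll depth and time on page per URL for two periods from your analytics tool. The `PageStats` fields, the `early_warnings` helper, the 15 percent threshold, and the sample numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    scroll_depth_prev: float  # average share of page scrolled (0.0 to 1.0), earlier period
    scroll_depth_now: float   # same metric, recent period
    time_on_page_prev: float  # average seconds, earlier period
    time_on_page_now: float   # average seconds, recent period


def relative_change(prev: float, now: float) -> float:
    """Fractional change from prev to now; 0.0 when there is no baseline."""
    if prev == 0:
        return 0.0
    return (now - prev) / prev


def early_warnings(stats: list[PageStats], drop: float = 0.15) -> list[tuple[str, str]]:
    """Flag pages whose scroll depth fell by at least `drop`, noting if time on page fell too."""
    warnings = []
    for s in stats:
        scroll = relative_change(s.scroll_depth_prev, s.scroll_depth_now)
        time_change = relative_change(s.time_on_page_prev, s.time_on_page_now)
        if scroll <= -drop and time_change <= -drop:
            warnings.append((s.url, "scroll depth and time on page both down"))
        elif scroll <= -drop:
            warnings.append((s.url, "scroll depth down"))
    return warnings


# Made-up numbers for illustration only.
stats = [
    PageStats("/blog/a", 0.60, 0.45, 90, 88),
    PageStats("/blog/b", 0.55, 0.54, 80, 79),
    PageStats("/blog/c", 0.70, 0.50, 120, 80),
]
for url, reason in early_warnings(stats):
    print(url, reason)
```

Pages flagged for scroll depth alone are the ones to review first, since they have not yet lost reading time.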

The Cost of Ignoring Entropy

Teams pay twice when repetition takes over. First through traffic loss. Second through cleanup.

Rewriting costs more than early review. Brand voice weakens. Editors burn out. AI content marketing loses internal trust. Some teams pause publishing to rebuild quality.

Prevention always costs less than recovery.

I documented a hands-on system here: https://procampaigns.online/i-built-a-free-ai-seo-system-that-runs-itself-exclusive-and-free-tutorial/

If You Understood, Follow The Rule

Publishing more content does not rebuild trust. Clear thinking does. Teams that protect variation protect long-term visibility. Teams that ignore it spend time fixing avoidable problems. AI content marketing works only when editorial discipline controls output.

It fails when pages start to look and read the same. Readers disengage early, and search systems follow that behavior over time.

Automation does not hurt SEO by itself. Problems start when it removes variation and intent from content.

Scroll depth drops first, followed by time on page. Rankings usually change later.

Every publish cycle needs review. Skipping review saves time in the short term but causes losses later.

Using different structures and clear intent across similar topics helps pages stay distinct and perform longer.

AI content detectors flag predictability, not usefulness. Clean and consistent writing often triggers false positives.

Human review is still necessary. It prevents repetition and protects clarity across pages.

Recovery is gradual. Most sites see early movement within six to ten weeks, depending on scale and the changes made.